Context Engineering is the new Prompt Engineering

Context engineering represents a pivotal discipline in the advancement of Generative AI, moving beyond the tactical limitations of prompt engineering to establish a comprehensive framework for AI performance. This systematic process involves laying down foundational rules, requirements, and contextual information, enabling Generative AI models to produce highly meaningful and relevant outputs. Key mechanisms, including In-Context Learning (ICL) for dynamic adaptation and Retrieval-Augmented Generation (RAG) for factual grounding, form the technical backbone of this approach. The integration of structured data is paramount for ensuring accuracy and traceability, especially in enterprise applications. While significant benefits such as enhanced accuracy, reduced hallucinations, and improved specificity are evident across diverse industries, challenges related to computational overhead, data complexities, and ethical considerations necessitate careful navigation. The trajectory of context engineering points towards increasingly autonomous and intelligent AI systems, underscoring its role as a strategic imperative for organizations seeking to unlock the full potential of Generative AI.

Introduction to Context Engineering in Generative AI

The emergence of Generative AI has revolutionized various industries, offering unprecedented capabilities in content creation and problem-solving. However, unlocking the full potential of these advanced models requires a sophisticated approach that extends beyond simple instruction-giving. This is where context engineering becomes a foundational discipline, providing the necessary framework for AI systems to operate effectively within complex, real-world environments.

Defining Context Engineering: The “Hive Architecture”

Context engineering is a systematic process through which human experts establish the foundational rules, requirements, and contextual information essential for Generative AI to produce meaningful and relevant output. This comprehensive process encompasses a range of critical tasks, including the analysis and mitigation of risks, meticulous pre-processing of data, precise definition of problem statements, and the setting of clear project goals. The depth of these activities indicates that context engineering is not merely a technical input method but rather a strategic design discipline. Its involvement in defining project objectives and addressing potential risks signifies a higher-level, pre-computation, and continuous management approach, elevating its importance to a core architectural and strategic component of AI system development.

To illustrate the collaborative dynamic at play, an analogy of a “hive architecture” is often employed. In this framework, Generative AI models are likened to “worker bees,” capable of efficiently producing coherent and functional output based on their training data, such as drafting emails or generating code snippets. However, these models inherently lack an understanding of the broader context in which these tasks are embedded, making their role more akin to an auxiliary tool than a primary decision-maker. Human domain experts, conversely, embody the “queen bees,” providing the critical insight and experience indispensable for any project’s success. These experts possess the unique ability to dissect complex problems into simpler, more manageable tasks that the Generative AI can then handle. Their specialized knowledge ensures that the generated tasks align precisely with the specific requirements and constraints of a project, and they can anticipate many domain-specific and project-related risks.

In this analogy, context engineering serves as the “hive architecture” itself—the systematic environment within which both the Generative AI (worker bee) and the human domain expert (queen bee) operate synergistically. This framework ensures that the AI’s capabilities are effectively guided and constrained by human intelligence and experience. The relationship between a human pilot and a copilot in an airplane provides a further parallel: the copilot assists, manages sub-tasks, and follows directions, but the pilot retains the primary role of flying the plane. Similarly, Generative AI, even advanced foundational models like GPT-4, functions as a “copilot” in fields such as software engineering. It can draft code, suggest algorithms, or optimize existing systems, but overarching architectural decisions and fine-tuning necessitate the nuanced understanding and decision-making abilities of a human expert. This perspective underscores that context engineering is fundamentally about designing effective human-AI collaboration frameworks, where the AI augments human capabilities rather than replacing them. This integrated, collaborative paradigm is essential for successful AI adoption.

Context Engineering vs. Prompt Engineering: A Paradigm Shift

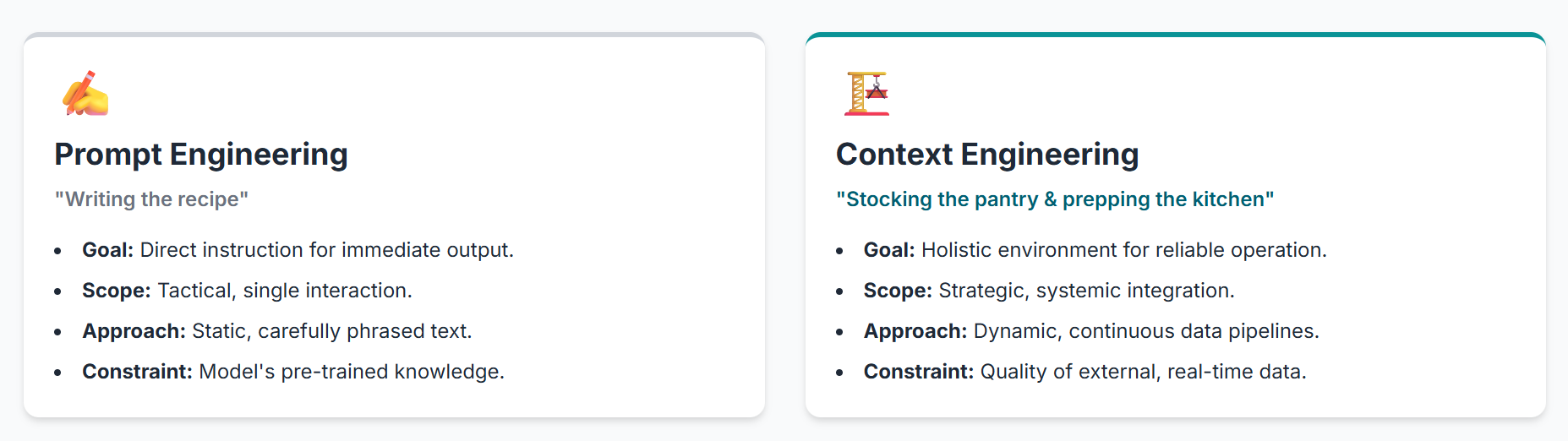

The evolution of Generative AI has brought about a significant shift in how human-AI interactions are conceptualized, moving from prompt engineering to a more holistic approach known as context engineering. Prompt engineering, in its essence, involves crafting carefully phrased, logically structured, and goal-directed instructions for the AI. It is often described as “writing the recipe” for the AI, providing static prompts to guide its output.

Context engineering, by contrast, represents a more comprehensive and dynamic system. It is akin to “stocking the pantry, prepping the key ingredients, and ensuring the model remembers what’s already been cooked”. The core objective is to ensure that the model possesses the “right information, at the right time, to reason and take meaningful action”. This system is inherently dynamic, meaning the logic for constructing the final prompt must be adaptive, rather than static. It encompasses a broad spectrum of inputs, including the system prompt (defining the AI’s persona), the user message, external information, tools, retrieved documents, and even implicit context such as the user’s identity, past interactions, and current intent.

The industry narrative has notably shifted from a focus on “prompt craft to context pipelines”. This transition signifies that the capabilities of Generative AI models are no longer primarily constrained by how cleverly instructions are phrased, but rather by how effectively they are prepared to understand the real-world context in which they operate. A meticulously designed prompt can still fail if the model lacks access to the necessary organizational context that renders the request relevant and actionable. This highlights a fundamental shift: prompt engineering is a tactical, one-off interaction, whereas context engineering implies a strategic, ongoing effort to build and maintain an intelligent information architecture around the LLM. The emphasis on “dynamic systems” and “context pipelines” suggests that the challenge lies not just in what to ask, but how to continuously feed and manage the vast, evolving information landscape within an enterprise. This necessitates robust data governance, integration, and real-time data flow capabilities.

A compelling illustration of context engineering in action is McKinsey’s internal Generative AI tool, Lilli. This tool unifies over 40 knowledge repositories and 100,000 documents into a single searchable graph. When a consultant poses a question, Lilli scans this extensive corpus, retrieves the five to seven most relevant artifacts, generates an executive summary, and even directs the consultant to the appropriate in-house experts for follow-up. This “retrieval-plus-synthesis loop” has achieved remarkable 72% firm-wide adoption and is saving teams up to 30% of the time previously spent sifting through various internal systems. The success of Lilli demonstrates that the decisive edge in Generative AI applications is not necessarily a larger model, but rather a “meticulously engineered stream of proprietary context”. This is a crucial observation: while access to powerful LLMs is becoming democratized, the ability to effectively engineer and leverage an organization’s unique, proprietary context (encompassing data, schema, code, business logic, and more) emerges as the true competitive advantage. This moves the focus from generic AI capabilities to domain-specific, enterprise-tailored AI solutions, where context engineering serves as the key enabler.

Key Differences:

Prompt Engineering vs. Context Engineering

| Aspect | Prompt Engineering | Context Engineering |

|---|---|---|

| Goal | Direct instruction for immediate output | Holistic environment for meaningful AI operation |

| Scope | Single interaction or short sequence | Systemic integration of diverse information |

| Approach | Static phrasing, careful word choice | Dynamic data pipeline, continuous context delivery |

| Focus | Input-centric, crafting the query | Information architecture, contextual relevance |

| Nature | Tactical, one-off | Strategic, ongoing, systemic |